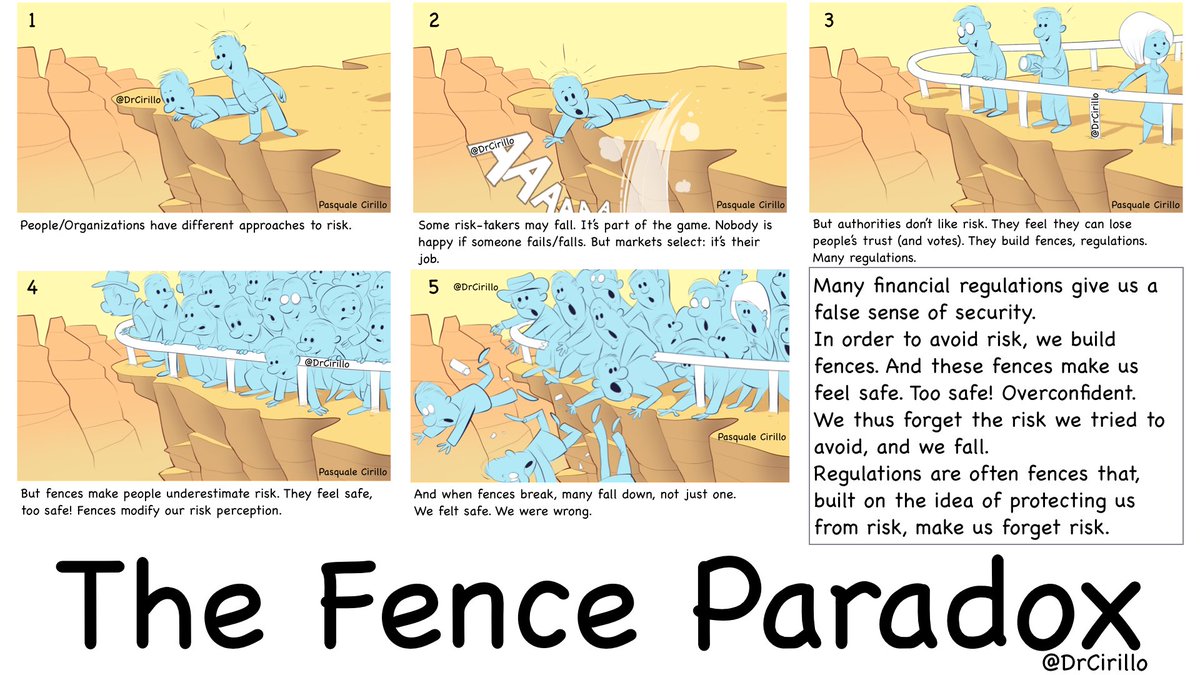

Pasquale Cirillo made a great visual explanation of the Fence Paradox.

The reason behind the Fence Paradox is a phenomenon called Risk Homeostasis, which states the following:

“Whenever a novel safety technology enables a person to choose between performing a task more effectively at the same perceived risk level as before or performing the task more safely at the same effectiveness level as before, he will choose the former.”

Case study: the ABS

For example, ABS (the automotive Antilock Braking System, which prevents the wheels of your car from locking up when you brake on a wet road) did not save any lives in the long run. Rather than making us safer on the road, it allowed us to go faster while keeping the perceived level of safety constant. In particular, the ABS reduced the number of non-fatal crashes (those where vehicles were going slowly) but *increased* the number of fatal ones (most probably because drivers felt safer with the ABS and drove at higher speeds) [Source: US Department of Transportation]. Effectively, the ABS worked like the fence from the image above: by preventing the lightest incidents, it increased the perceived level of safety, and the drivers responded by increasing their speed. Since high-speed incidents are much more likely to be fatal than low-speed ones, the ABS ultimately increased the overall number of deaths.

Simple fences

Fences (such as the ABS) tend to reduce the number of low-damage events and to increase the number of catastrophes, due to humans adjusting their behavior to keep the perceived level of risk constant. Technically speaking, fences fatten the tails of the distribution of incident severity, as humans transfer the benefits from safety to efficiency.
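The mechanism can be sketched with a toy model. Everything in it is an assumption made for illustration, not data: the functions `minor_rate` and `fatal_rate`, their coefficients, and the `protection` factor are hypothetical. The driver is assumed to pick the speed at which perceived risk (here, simply the sum of the two rates) matches the level they tolerated before the fence.

```python
def minor_rate(speed, protection):
    """Frequent, low-damage incidents; the fence divides them by `protection`."""
    return 0.02 * speed / protection


def fatal_rate(speed):
    """Rare catastrophes; they grow steeply with speed and ignore the fence."""
    return 1e-9 * speed ** 4


def perceived_risk(speed, protection):
    """Assumed perception: the driver feels the sum of both rates."""
    return minor_rate(speed, protection) + fatal_rate(speed)


def chosen_speed(target_risk, protection, lo=0.0, hi=500.0):
    """Bisection for the speed at which perceived risk equals the target."""
    for _ in range(100):
        mid = (lo + hi) / 2
        if perceived_risk(mid, protection) < target_risk:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2


base_speed = 50.0
# The risk level the driver tolerated before the fence existed.
target = perceived_risk(base_speed, protection=1.0)
# The speed the same driver settles on once the fence halves minor incidents.
fenced_speed = chosen_speed(target, protection=2.0)

for label, speed, protection in [("no fence", base_speed, 1.0),
                                 ("simple fence", fenced_speed, 2.0)]:
    print(f"{label:>12}: speed={speed:6.1f}  "
          f"minor={minor_rate(speed, protection):.3f}  "
          f"fatal={fatal_rate(speed):.4f}")
```

Under these assumptions, perceived risk is identical in both rows, yet the fenced driver goes faster, suffers fewer minor incidents, and faces a much higher fatal rate. The exact numbers mean nothing; only the direction of the shift does.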

I define a “simple fence” as a technology which:

- reduces the risk of a given hazardous behavior BUT

- also allows for a behavior adjustment which could allow the person performing it to be more effective on another dimension, while keeping the perceived level of safety constant.

Simple fences should be avoided because they increase the risk of catastrophe.

“Hey, aren’t fences useful? We just need people to use them correctly.” is the usual comment I hear. “This will never happen,” is my reply. Risk homeostasis is just too entrenched in human behavior.

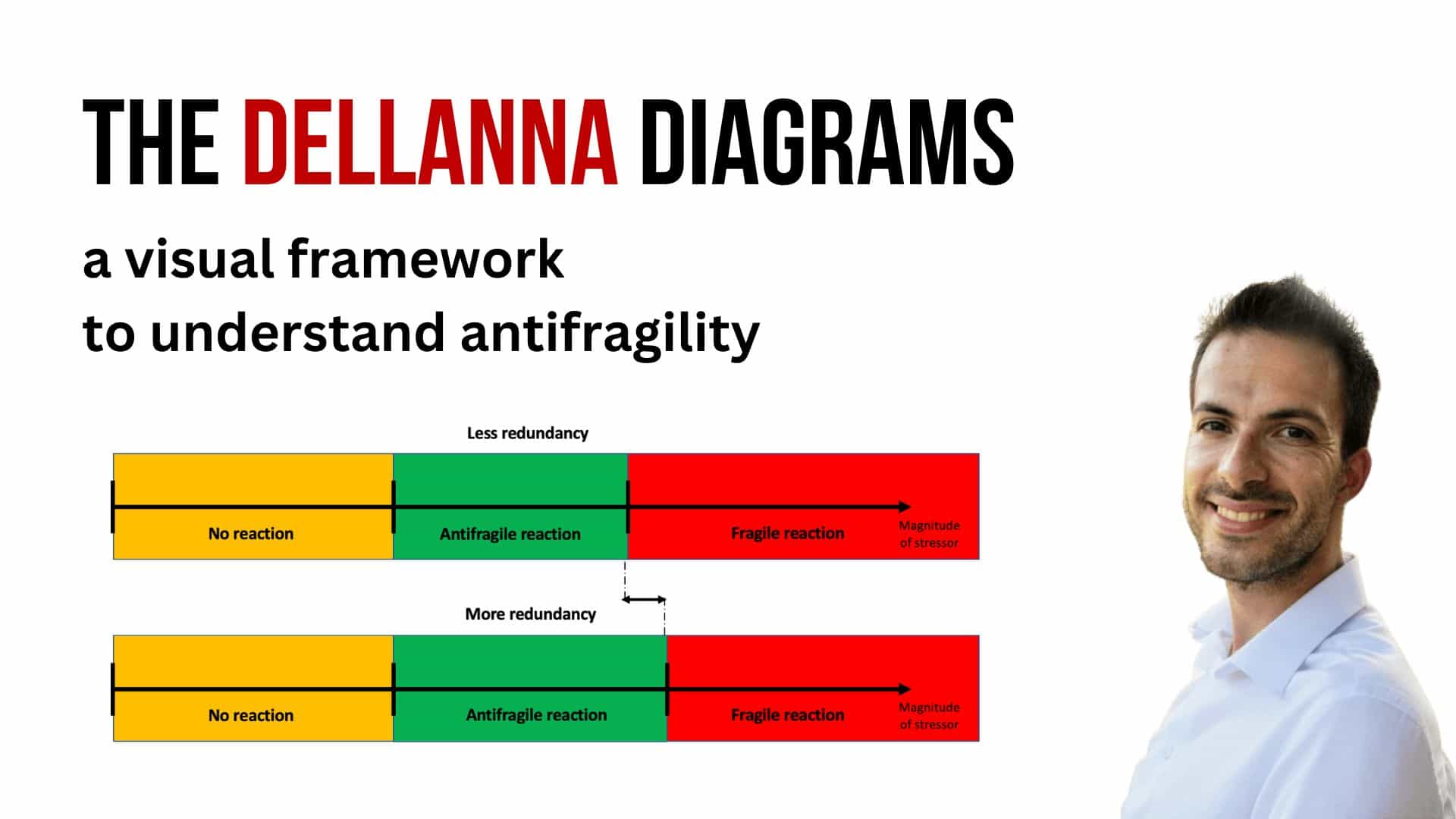

Electrified fences

A fence, to be effective, should not allow people to lean on it until it breaks. A fence should always be electrified: it should hurt, in a non-fatal way, people who attempt to rely on it to get an advantage over people who don’t. Electrified fences prevent both medium-sized negative events (a single person falling into the canyon) and catastrophes (lots of people pressing on the fence, breaking it, and falling into the canyon), at the affordable cost of slightly hurting those who try to rely on it by adopting a behavior which wasn’t possible before the fence was in place.

Electrified fences are better than simple ones, for they:

- reduce the risk of a given hazardous behavior AND

- do not allow for a behavior adjustment which could allow the person performing it to be more effective in another dimension.
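The difference between the two kinds of fence can be sketched with a toy model. All of it is assumed for illustration: the rate functions, their coefficients, `BASE_SPEED`, and the `sting` parameter (the pain felt per unit of speed above the pre-fence speed) are hypothetical. The driver is assumed to pick the speed at which perceived risk matches the level tolerated before any fence.

```python
BASE_SPEED = 50.0  # hypothetical speed drivers chose before any fence


def minor_rate(speed, protection):
    """Frequent, low-damage incidents; the fence divides them by `protection`."""
    return 0.02 * speed / protection


def fatal_rate(speed):
    """Rare catastrophes; they grow steeply with speed and ignore the fence."""
    return 1e-9 * speed ** 4


def perceived_risk(speed, protection, sting):
    """Both rates plus the pain of leaning on the fence (speed above base)."""
    leaning = max(0.0, speed - BASE_SPEED)
    return minor_rate(speed, protection) + fatal_rate(speed) + sting * leaning


def chosen_speed(target_risk, protection, sting, lo=0.0, hi=500.0):
    """Bisection for the speed at which perceived risk equals the target."""
    for _ in range(100):
        mid = (lo + hi) / 2
        if perceived_risk(mid, protection, sting) < target_risk:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2


# The risk level tolerated with no fence at all.
target = perceived_risk(BASE_SPEED, protection=1.0, sting=0.0)
simple = chosen_speed(target, protection=2.0, sting=0.0)
electrified = chosen_speed(target, protection=2.0, sting=1.0)

for label, speed in [("simple fence", simple), ("electrified fence", electrified)]:
    print(f"{label:>17}: speed={speed:6.1f}  "
          f"minor={minor_rate(speed, 2.0):.3f}  fatal={fatal_rate(speed):.4f}")
```

Under these assumptions, the simple fence lets the driver trade all of the added protection for speed, while the sting keeps the chosen speed close to `BASE_SPEED`: minor incidents still fall, and the fatal rate stays near its pre-fence level instead of multiplying.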

I postulate that most simple fences should be replaced with electrified fences (or invisible mattresses; more on this later). People usually react by saying, “Hey, the electrified fence hurts; why don’t we use a simple one instead? I promise I won’t lean on it.” History shows the opposite: if given a chance to lean on a fence, humans always end up doing so, sooner or later. Just as gases always expand to fill the container they’re in, human behavior always expands to fit the boundaries of what’s acceptably safe.

Case study: motorbike helmets

Motorbike helmets work because they don’t let bikers count on feeling no pain at all. If a biker slips and falls, the helmet will prevent a fatal head injury; however, their arms and legs are not as protected, so any incident will be a painful one. Helmets work *because* they don’t prevent light or medium injuries, not *despite* it. Helmets are electrified fences.

Overall safety

A common fallacy is to presume that increasing safety at any point of the incident distribution increases overall safety. However, risk homeostasis tells us that perceived risk is the constant, not human behavior. Therefore, increasing safety in a perceivable way in one area of the distribution will lead to a decrease in safety in another area. We achieve safety from catastrophes by *reducing* safety from light-consequence events.

Most safety policies fail because they aim to increase safety while assuming that human behavior stays fixed. They should instead aim to increase overall safety given that human behavior adapts.

They also fail because they aim to increase safety in a visible way (so that the policymaker can be celebrated). Doing so will lead to humans adapting to the visibly increased level of safety. They should instead invisibly increase safety.

I will push the concept even further: since the center of the distribution (the small incidents) is more frequent, and thus more observable, than the tails (the catastrophes), policymakers should aim to decrease the visible level of safety in order to increase the invisible one (safety from catastrophes).

The attentive reader will notice the paradox: why would a policymaker, who relies on a democratic vote, choose to decrease a visible indicator? That’s one of the strongest obstacles to increasing safety in our lives.
